This is the second of three articles examining the natural resource cost of AI infrastructure. Part 1 explored AI’s hidden water problem. Part 3 will address the critical minerals and metals that make it all possible.

When a major technology company announces a new AI data center campus, the conversation almost always centers on the same set of variables: investment size, job creation, tax incentives, and processing capacity. What receives far less attention is the question that will ultimately determine whether that campus can operate as planned for the next two or three decades: where will the power come from?

This is not a trivial question. AI infrastructure is, by a significant margin, the fastest-growing source of electricity demand in the United States and much of the world. The scale of that demand — and the fuels being used to meet it — has consequences that extend well beyond the technology sector, touching electricity bills, grid reliability, emissions trajectories, and the long-term economics of energy infrastructure itself.

Behind the clean language of digital progress, AI runs largely on fossil fuels. Understanding why — and what it means for the developers, investors, and communities involved — requires looking at the energy problem with the same clarity that the AI industry has so far preferred to avoid.

A demand unlike anything the grid has seen

U.S. data centers consumed 183 terawatt-hours (TWh) of electricity in 2024 — more than 4% of the country’s total electricity consumption, and roughly equivalent to the annual electricity demand of Pakistan, according to the International Energy Agency. By 2030, that figure is projected to grow by 133%, reaching 426 TWh. For context, the Lawrence Berkeley National Laboratory estimates data center demand could represent between 6.7% and 12% of all U.S. electricity consumption by 2028 — a range whose width reflects just how uncertain the pace of AI adoption remains.
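The growth figures above can be sanity-checked with a few lines of arithmetic. The sketch below is illustrative only: the 183 TWh and 133% values come from the text, while the six-year horizon (2024 to 2030) and the function names are assumptions made for this example, not part of the IEA projection.

```python
def project_demand(base_twh: float, growth_pct: float) -> float:
    """Apply a total percentage increase to a base-year demand figure."""
    return base_twh * (1 + growth_pct / 100)


def implied_annual_growth(start_twh: float, end_twh: float, years: int) -> float:
    """Compound annual growth rate implied by a start value, end value, and horizon."""
    return (end_twh / start_twh) ** (1 / years) - 1


demand_2024 = 183.0  # TWh, figure cited in the text
demand_2030 = project_demand(demand_2024, 133)  # 133% growth cited in the text
# Assumption: growth compounds evenly over the six years from 2024 to 2030.
cagr = implied_annual_growth(demand_2024, demand_2030, years=6)

print(f"2030 demand: {demand_2030:.0f} TWh")
print(f"Implied annual growth: {cagr:.1%}")
```

Under that even-compounding assumption, the projection implies demand growing by roughly 15% every year, which is far faster than the pace at which overall U.S. electricity demand has historically changed.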

The scale at the facility level is equally striking. According to the IEA, a typical AI-focused hyperscale data center consumes as much electricity as 100,000 households. The largest facilities currently under construction are expected to use 20 times as much. Meta’s Hyperion project in Louisiana, for instance, will require at least 5 gigawatts to operate, three times the electricity consumption of the entire city of New Orleans.
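To put a gigawatt figure next to the terawatt-hour figures used elsewhere in this article, power has to be converted into annual energy. The sketch below is a rough upper-bound estimate: it assumes the 5 gigawatts are drawn continuously all year (a load factor of 1.0), which is an assumption of this example and not a published figure for the project. The function name and constant are illustrative.

```python
HOURS_PER_YEAR = 8760  # 365 days x 24 hours


def annual_energy_twh(power_gw: float, load_factor: float = 1.0) -> float:
    """Annual energy in TWh for a facility drawing power_gw at a given load factor."""
    return power_gw * HOURS_PER_YEAR * load_factor / 1000  # GWh -> TWh


# Assumption: continuous draw at the full 5 GW, so this is an upper bound.
campus_twh = annual_energy_twh(5.0)
share_of_2024_total = campus_twh / 183.0  # 183 TWh: 2024 U.S. data center total

print(f"Annual energy at full load: {campus_twh:.1f} TWh")
print(f"Share of 2024 U.S. data center consumption: {share_of_2024_total:.0%}")
```

A lower load factor scales the result proportionally, so passing `load_factor=0.8` would reduce the estimate by a fifth.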

This is not a demand that can be quietly absorbed into existing grid capacity. It is a structural shift, one that is forcing decisions about power generation, grid infrastructure, and fuel sources that will lock in energy outcomes for decades.

The fossil fuel reality behind the clean energy narrative

Technology companies have made ambitious public commitments to renewable energy. Google, Microsoft, Meta, and Amazon are collectively the largest corporate buyers of clean energy in the world. In 2024 alone, major tech firms accounted for 43% of all clean energy power purchase agreements signed globally.

And yet, according to the IEA, natural gas supplied over 40% of electricity for U.S. data centers in 2024, making it the single largest energy source. Renewables followed at 24%, nuclear at approximately 20%, and coal at around 15%. Globally, the picture is starker: roughly 56% of data center electricity currently comes from fossil fuels (i.e., natural gas and coal), with coal accounting for nearly 30% of the global mix, concentrated primarily in China and parts of Asia.
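The percentage shares above can be translated into approximate energy volumes by applying them to the 183 TWh total cited earlier. This is a back-of-the-envelope sketch: the shares are rounded as stated in the text ("over 40%" is treated as exactly 40%), so the results are indicative rather than precise, and the variable names are illustrative.

```python
TOTAL_2024_TWH = 183.0  # U.S. data center consumption cited in the text

# Approximate shares from the text; "over 40%" is treated as 40% here.
mix_shares = {
    "natural gas": 0.40,
    "renewables": 0.24,
    "nuclear": 0.20,
    "coal": 0.15,
}

# Energy volume per source, in TWh.
mix_twh = {source: share * TOTAL_2024_TWH for source, share in mix_shares.items()}
fossil_share = mix_shares["natural gas"] + mix_shares["coal"]

for source, twh in mix_twh.items():
    print(f"{source:>12}: {twh:5.1f} TWh")
print(f"Fossil share of U.S. data center electricity: at least {fossil_share:.0%}")
```

The rounded shares sum to 99%, which is why the per-source volumes do not add up exactly to the total.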

The gap between corporate sustainability commitments and operational reality is not primarily a question of intent. It is a question of timing and physics. Renewable energy capacity is being added rapidly, but the pace of AI-driven data center construction is outrunning the pace at which clean generation can be connected to the grid. Interconnection queues in regions like PJM (the transmission organization serving the Mid-Atlantic and Midwest) have projects waiting up to eight years for grid connection. In that environment, the fuel that fills the gap is the fuel that is already available: natural gas and, in markets where it remains cost-competitive, coal.

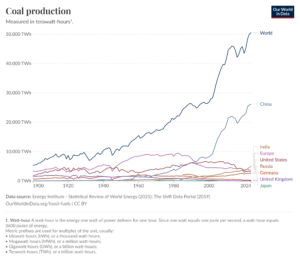

Natural gas is projected to remain the largest source of additional electricity supply for data centers through 2030, adding over 130 TWh of annual generation in the U.S. alone over the next five years. Coal generation, meanwhile, is not declining as anticipated, as the accompanying chart illustrates. In the first half of 2025, U.S. coal-fired generation was 15% higher than in the same period of 2024, a reversal of the long-term trend toward coal retirement, driven in part by the need to keep reliable baseload generation online as data center demand surges.

A grid under pressure: Reliability, cost, and the limits of existing infrastructure

The electricity demand from AI data centers is not only substantial. It is also structurally different from most industrial loads in ways that make it particularly challenging for the grid to absorb.

Most large energy users have variable demand. Factories ramp up and down, commercial buildings follow daily patterns, and residential consumption peaks in the morning and evening. Data centers, by contrast, run at near-constant, high load around the clock. They cannot easily reduce consumption during peak periods. A hyperscale facility drawing 100 to 500 megawatts places a continuous, inflexible demand on the system — the kind of load that grids, historically designed around variable and predictable patterns, were not built to serve at this scale or at this speed of deployment.

The cost consequences are becoming visible. A Bloomberg analysis found that wholesale electricity now costs as much as 267% more in some areas near significant data center activity than it did five years ago. In the PJM market, data centers contributed to an estimated $9.3 billion price increase in the 2025-26 capacity market, with average residential bills expected to rise by $16 to $18 per month in affected states. A Carnegie Mellon University study estimates that data centers and cryptocurrency mining could lead to an 8% increase in the average U.S. electricity bill by 2030, potentially exceeding 25% in the highest-demand markets of central and northern Virginia.

Beyond pricing, there are emerging signs of physical grid stress. A Bloomberg investigation using data from tens of thousands of sensors found that data center proximity correlates strongly with “bad harmonics”: disruptions to the normal flow of electricity that can damage appliances and, in extreme cases, increase fire risk. In Loudoun County, Virginia, the share of sensors recording problematic harmonic readings was more than four times the national average. The grid, in short, is not absorbing this load without consequence.

The energy infrastructure problem has a subsurface component

Meeting AI’s energy demand requires not just more electricity generation capacity, but the physical infrastructure to deliver it. Much of that infrastructure has a significant subsurface component that is frequently underestimated during site planning.

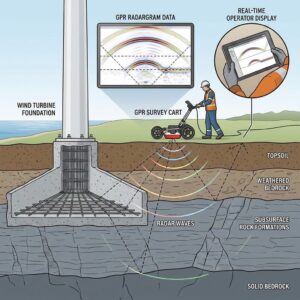

Natural gas plants require pipeline infrastructure, compressor stations, and geological characterization of the formations through which those pipelines must be routed. Geothermal energy, one of the most promising alternatives for providing reliable, carbon-free baseload power to data centers in suitable geological settings, depends entirely on deep subsurface investigation to determine whether a site holds viable heat resources at accessible depths. Even the siting of solar and wind installations, increasingly paired with data centers as dedicated power sources, requires geotechnical assessment of foundation conditions to ensure long-term structural stability.

The U.S. Department of Energy has identified geothermal energy as a significant opportunity to meet data center power demand sustainably, including through Cold Underground Thermal Energy Storage (Cold UTES), a technology that uses the subsurface itself as a thermal reservoir to offset peak cooling demand. These approaches require the same foundational subsurface intelligence that any responsible energy infrastructure project demands: understanding what lies beneath, before committing to what gets built above.

This is where geophysical investigation becomes directly relevant to the energy problem AI has created. Whether a developer is siting a gas-fired peaker plant to serve a hyperscale campus, exploring geothermal potential as an alternative baseload source, or characterizing the geological conditions beneath a co-located solar installation, the subsurface conditions cannot be assumed. They must be understood through geophysical surveys, geological interpretation, and the kind of integrated, site-specific analysis that turns an energy plan from a concept into a viable project.

The path forward: Cleaner, but not without understanding the subsurface

The long-term energy mix for AI infrastructure will not remain as fossil-fuel-dependent as it is today. The IEA projects that by 2035, the data center electricity mix could shift from roughly 60% fossil fuels and 40% clean power to the inverse: 60% clean and 40% fossil. Nuclear energy, including small modular reactors, is increasingly being cited as the most credible path to reliable, carbon-free baseload power for data centers. Several tech companies have already signed agreements with nuclear startups, and plans are underway to revive retired nuclear plants to meet rising data center demand.

But none of these transitions happen without infrastructure. And infrastructure — whether it is a natural gas pipeline, a geothermal well, a transmission corridor, or the foundation of a wind turbine — begins with understanding the ground it will occupy. The energy problem AI has created is, at its root, an infrastructure problem. And infrastructure problems, as any geoscientist or engineer will tell you, always have a subsurface component.

In the near term, the honest reality is that fossil fuels will continue to power AI’s growth. The question for developers, investors, and infrastructure planners is not whether to engage with that reality, but how to navigate it responsibly, securing reliable energy access while positioning projects for the cleaner grid that will eventually arrive. That navigation requires better information at every stage: better subsurface data for site characterization, better geological interpretation, and better integration of geoscience into the infrastructure planning process.

Energy infrastructure starts in the ground

AI’s energy problem is real, and it is not going away. The demand is too great, the infrastructure too slow to build, and the grid too unprepared for a surge of this speed and scale. But the response to that problem (whether it takes the form of new gas generation, geothermal development, nuclear power, or co-located renewables) will be built, in every case, on ground that must first be understood geologically.

That understanding begins with geophysical investigation: non-invasive, science-driven, and capable of providing the subsurface clarity that energy infrastructure planning requires before commitments are made and capital is deployed.

The AI servers don’t stop. Neither should the geological due diligence behind the power that keeps them running.

Next in the series: AI’s Critical Minerals Problem — the metals and materials that make AI hardware possible, and the geological and environmental cost of extracting them.

Does your energy infrastructure project start with solid subsurface knowledge?

Whether you are planning a natural gas facility, exploring geothermal potential, or assessing foundation conditions for a co-located renewable installation, CGS brings certified geoscience expertise, a multi-method geophysical approach, and deep familiarity with the geological environments of Texas, the broader US Southwest, and the US-Mexico corridor.